Digital Designer’s Creative AI Assistants – Fall 2023 edition

Here are Qvik’s top picks among today’s adequately mature artificial wingmen. This story helps you tease out the best inspiration and production tips, and points out the traps to avoid.

Generative AI tools have quickly emerged as a new category supporting multiple creative professions. They promise that in the future, our work will be faster and more productive. According to IBM’s Chief Design Officer Billy Seabrook, many tasks in the design process might become up to five times faster.

At Qvik, our digital product designers have been experimenting with and reviewing several of these products. Here’s what we think about the possibilities.

What counts as a generative AI tool?

The number of tools incorporating machine learning and various artificial intelligence features is constantly growing. Many simple and old tools, such as Microsoft PowerPoint, have started using modern tech solutions under the hood. Yet not all software with generative AI features is a prime example of the category.

For this story, generative AI refers to all tools that, regardless of their underlying technology, can produce content similar to human-made creations in terms of complexity and, at times, aesthetics. You could call them “creative co-pilots,” as many tech companies favor the term co-pilot when referring to these solutions. This definition excludes some AI-enabled assistant software, such as meeting note-takers or research platforms like HeyMarvin, which all utilize AI/ML features but are not intended to be similarly generative.

Furthermore, we focus on recently released tools or new features that have a chance of complementing usual designer workflows. These applications were not available until a few years ago.

Several large tool categories are consequently excluded. These include video, presentation, animation, and documentation generation tools. While each of these categories is ripe with multiple exciting applications that may be extremely valuable for some UX designers, they are outside the core of the craft.

How do you get your hands on gen AI tools?

Most of the tested tools are available online using a web browser. They usually offer a free, trial, or demo mode so you can get a feeling for their functionality without a paid license. However, you are required to use a social login or create an account.

Only a few apps, such as Photoshop, always require a desktop installation. We therefore encourage you to go and try the tools out for yourself.

Overview of the tools we have explored

Our product designers have been trying out many tools across different varieties of creative production. We currently see the design-relevant generative AI landscape in four areas and find that there are already multiple products available in each category, as outlined in the attached table:

| Text and dialogue | Visual images | UI layouts | Figma plugins |
| --- | --- | --- | --- |
| ChatGPT (3.5 & 4) | Dall-E | UIzard | Ando – Ai copilot |
| Perplexity.AI | DreamStudio | Visily | Magestic |
| Jasper.AI | Midjourney | Fronty | UXpilot.ai |
| Copy.AI | Runway | Adalo | WireGen |
| Miro AI | Adobe Photoshop | Gamma.app | Magician |
| | AutoDraw | Durable | Conjure.AI |
| | | | QoQo.ai |

These tools can be used at several stages of the professional digital product design process: design research, concept/service design, UX design, and continuous development.

In the following, we’ll highlight the most exciting tools and their applications in each category.

| User research & benchmarking | Concept design | UI design & documentation |
| --- | --- | --- |
| Text tools | UI layout, images | Figma plugins, images |

Text and dialogue tools top picks

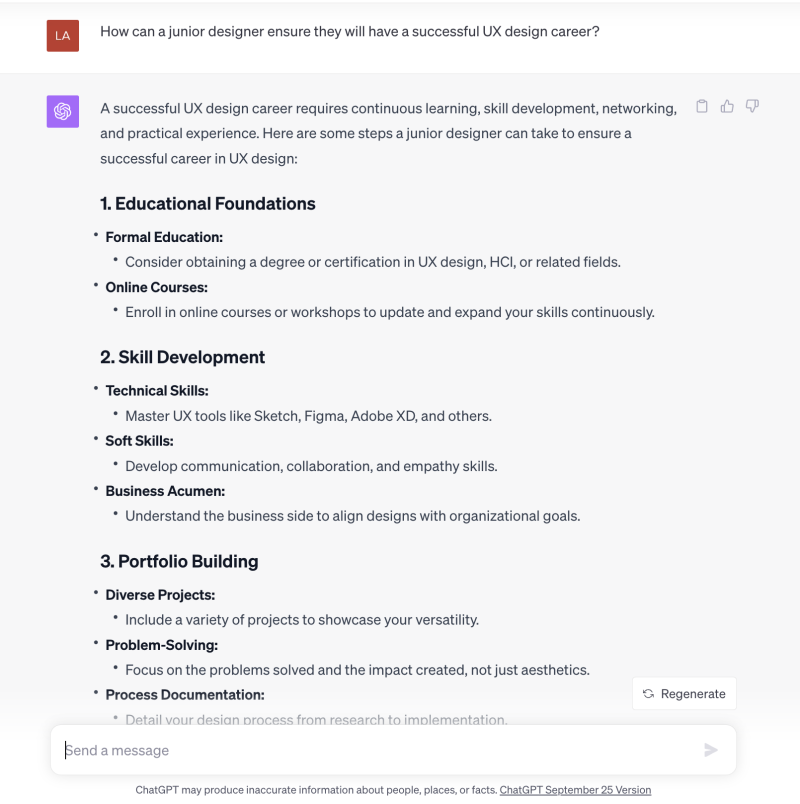

ChatGPT and Perplexity AI are currently the most prominent tools for getting textual answers from AI. The applications are similar and share underlying technology, as Perplexity is built upon the same GPT technology as ChatGPT, just a different variant. Both rely on natural language as a way to interact with them.

While natural language is intuitive as a form of interface, it also presents the user with a modest cold-start or blank-page problem, as the human needs to learn how to talk to the AI. The way to interact, called “prompting,” resembles natural conversation, but the interfaces don’t feel quite finished yet.

While uncluttered text prompts can feel as clear as a Linux/Unix command-line prompt, they do little to guide users to discover all the available functionality and options.

Once you get the conversation going, the wonderful and scary feature of text tools is that they can provide answers to virtually any question or challenge you can throw at them. Text AI tools can be beneficial for design research and ideation, and can support idea and problem validation.

Example use cases in which text tools can support you today:

- Benchmark existing solutions

- Assist in problem validation

- Do “synthetic” user research to help develop research guides

- List known solutions to help ideation

- Copywriting and proofreading

One of the best ways to use them is to think of them as untiring assistants who can always answer your stupid questions and remind you of things you have forgotten because you do them so infrequently. When they complement your own memory, you are in a position to recognize whether the presented ideas are sensible and relevant in your current context.

For all non-native speakers, AI tools offer unprecedented spell-checking and copywriting assistance. The language they produce is almost always grammatically correct, which helps you spot errors in your own writing. Some solutions take this further. Copy.AI promises to create full-blown content or specific text assets following the brand’s tone of voice.

Issues and limitations in text generation

The best-known issue with text-generating AI is its tendency to hallucinate. While AI may produce grammatically correct and plausible-sounding responses, these responses may be total nonsense. Thus, taking the output at face value is strongly discouraged. Perplexity AI can help the user fact-check by offering web-based references.

References are helpful, but the user must remember that they may also be unreliable. Text-generating AI is best used for inquiry, not authority.

ChatGPT itself offers insightful advice in Reid Hoffman’s GPT-4-themed book:

Human beings should interact with a powerful LLM with caution, curiosity, and responsibility. A powerful LLM can offer valuable insights, assistance, and opportunities for human communication, creativity, and learning, but it can also pose significant risks, challenges, and ethical dilemmas for human society, culture, and values.

Text tools have limits to both their input and output. They don’t remember the entire conversation history, even if they may give a good illusion of it. Users can work around the input limitations by chunking their content, but the input buffer size currently limits their usability for larger tasks, such as translating long pieces of text.
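The chunking workaround mentioned above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not how any particular tool works: the function name `chunk_text` and the `max_chars` limit are hypothetical, and character count is used only as a rough stand-in for the token-based limits that real text tools apply.

```python
def chunk_text(text: str, max_chars: int = 2000) -> list[str]:
    """Split text into chunks of at most max_chars, preferring paragraph breaks.

    max_chars is an assumed, illustrative limit; real tools count tokens.
    """
    chunks: list[str] = []
    current = ""
    for paragraph in text.split("\n\n"):
        paragraph = paragraph.strip()
        if not paragraph:
            continue
        # A single paragraph longer than the limit is cut hard into pieces.
        while len(paragraph) > max_chars:
            if current:
                chunks.append(current)
                current = ""
            chunks.append(paragraph[:max_chars])
            paragraph = paragraph[max_chars:]
        # Try to append the paragraph to the chunk being built.
        candidate = f"{current}\n\n{paragraph}" if current else paragraph
        if len(candidate) <= max_chars:
            current = candidate
        else:
            chunks.append(current)
            current = paragraph
    if current:
        chunks.append(current)
    return chunks


if __name__ == "__main__":
    article = "First paragraph.\n\nSecond paragraph.\n\nThird paragraph."
    for number, chunk in enumerate(chunk_text(article, max_chars=40), start=1):
        print(f"--- chunk {number} ({len(chunk)} chars) ---")
        print(chunk)
```

Each chunk can then be pasted into the tool as a separate message. Keep in mind that the tool may not carry context from one chunk to the next, so a task like translation can lose consistency across chunk boundaries.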

Visual image generation top picks

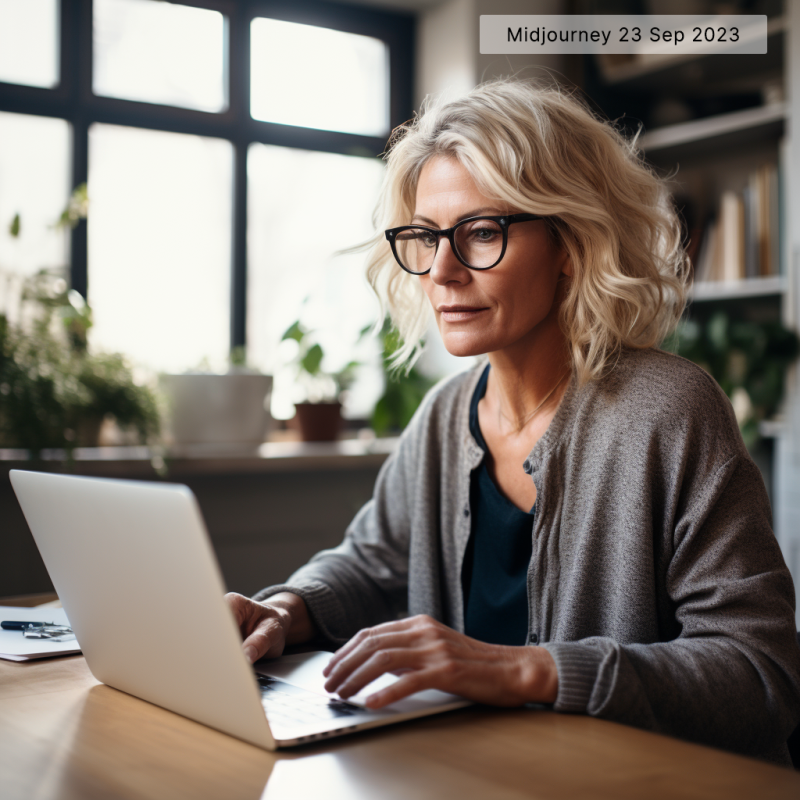

Midjourney is currently the most popular and effective image-from-text generation tool. Midjourney can already create production-quality images that can replace human illustrations in most applications. Created by a company of the same name, Midjourney is accessible through the messaging platform Discord, which is its most significant limitation, in our opinion.

The best part of image creation is that, unlike with text generation, you always see what you’ll get. There are no facts to check; a simple visual inspection is enough to tell whether the AI hit the spot or missed. Operation is simple: you first provide a prompt and then receive four or so low-resolution candidates, from which you can request more variations or a high-resolution version.

There are several other text-to-image tools out there. Dall-E, DreamStudio, and Runway each offer their own solution, with slightly different technical features and UI. For instance, DreamStudio includes a simple way to enter negative prompts and upload images to guide image creation.

Below, you can find a collage of the different images produced with the same prompt in the three applications mentioned above. First come the low-resolution preview candidates.

Low resolution image variations

Prompt: A scandinavian blonde female in her early fifties in a business attire using a mobile phone in a cafe in the evening

High resolution images

Visual imagery continues with tools supporting human visual output rather than building everything from scratch. For instance, AutoDraw can guide you to draft simple vector graphics from freehand illustrations quickly. This solution could be helpful in creating supplementary images for storyboards or similar intermediate artifacts.

You may have heard of Stable Diffusion and wonder why it wasn’t mentioned here. The reason is that Stable Diffusion is a technology that several applications, such as DreamStudio, use, but which in itself is not easy to use without some technical knowledge.

Issues and limitations in visual image generation

Known issues with image generation have to do with accuracy and false details. When it comes to fine details that matter greatly to humans but might be missed by AI, such as the spelling of text and numbers or the number of fingers on a hand, the machines have been hilariously prone to failure.

Human figures easily take on an uncanny character, which makes them useless. Luckily, a professional designer can spot such issues immediately and revise or regenerate the images to get around the problem.

Image generators currently also have built-in limitations regarding image resolution and dimensions. For instance, their output may be insufficient for some applications, as models currently produce images of at most 1024×1024 pixels (Midjourney and Dall-E). In some cases, you can work around this with additional generative tools, such as Generative Fill in Adobe Photoshop 25 (2024), which can expand your canvas area as required, or with tools that upscale the image with AI.

Interacting with image-generation tools can also be frustrating. Midjourney sometimes has unexpected delays, and all applications can give you a hard time in controlling the exact content of their renderings.

Finally, image creation is subject to various biases. For example, people of different ages, skin colors, and ethnicities will not be equally likely to be portrayed by the AI model. Thus, users should be cautious and adjust their prompting to avoid biases.

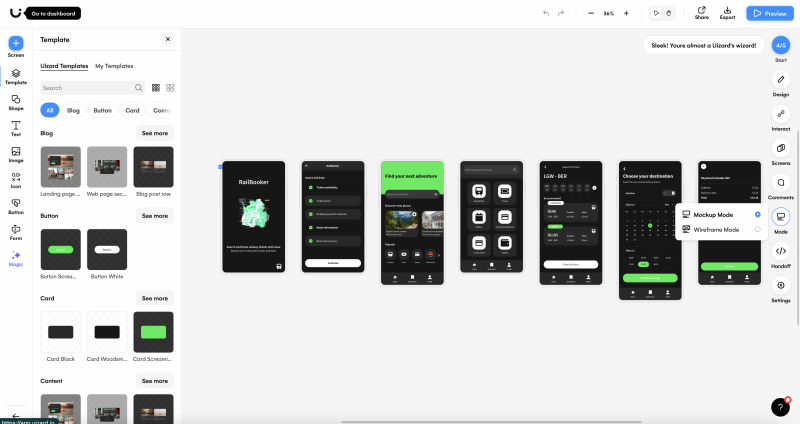

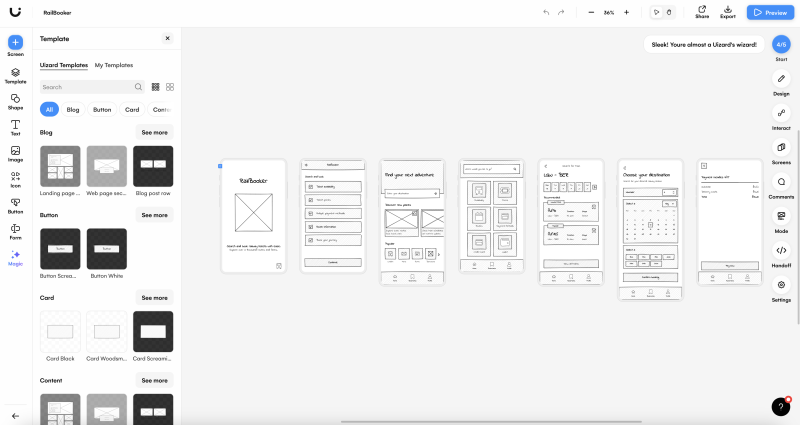

Full UI layouts are not there yet

Creating UI designs autonomously or helping humans create them faster is the value promise of a number of services. However, our overall opinion is that the maturity of UI design solutions is clearly lower than that of text and image tools.

Tools such as UIzard and Gamma.App offer sophisticated “website from scratch” functionalities. Each takes a totally different approach, but both intend to deliver a complete package from a minimalist starting point. Both offer the user freedom and tools to refine the output further.

The issue is that none of the tools produces production-level content. Basic web design is such a widespread but seldom required service that mass-generating fresh starts is not really called for. If, however, you are looking to prototype marketing websites, generative AI may give you a bit of a custom touch and options to choose from.

Figma AI plugins

The other approach is to offer AI plugins that add new powers to existing design tools. For instance, searching for “AI” in the Figma community currently produces exactly 100 hits among plugins. Most plugins are fresh, have at most a couple of thousand downloads, and have yet to be rated by users.

In our opinion, Figma AI plugins feel immature. Some of them show the potential to provide valuable features but don’t yet have the right solution for the right problem. With some plugins, it seems questionable whether they even have much AI under the hood. As such, we think they don’t yet fit professional UX designers’ workflow and can only provide random inspiration.

To highlight the scope of plugins, we name some examples. UXpilot.ai generates color and gradient combinations with accessible contrast checking. Unfortunately, the user can’t control the generation, which greatly reduces its usefulness. Magician is a bundle of generative features inside one plugin, offering text-to-image and text-to-icon generation as well as AI copywriting. Our designers found the most promise in text-to-icon generation and interactive copywriting.

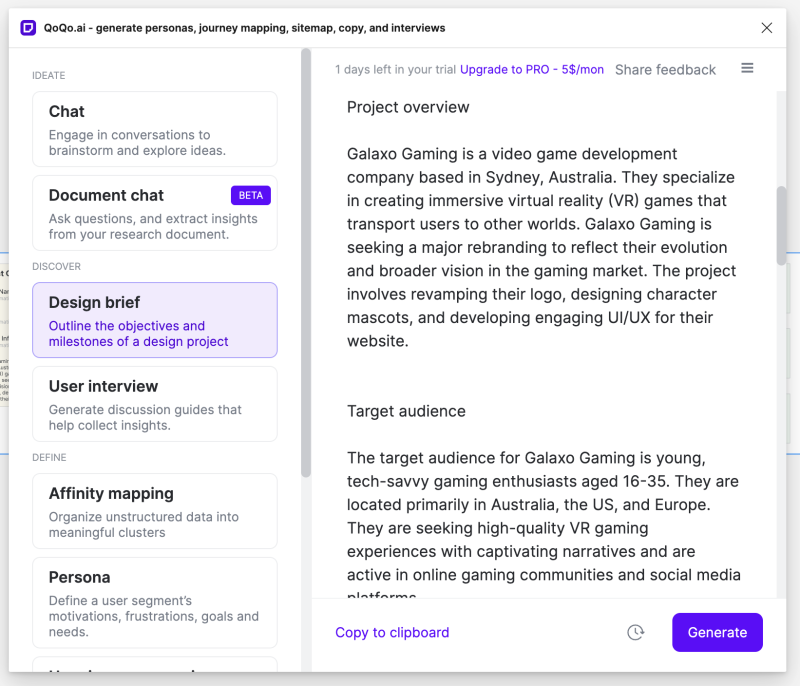
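To make “accessible contrast checking” concrete, here is a minimal sketch of the contrast ratio defined in the WCAG 2.x guidelines, where 4.5:1 is the AA threshold for normal text and 3:1 for large text. We don’t know how UXpilot.ai or any other plugin implements its check, so this is only an illustration of the public formula; the function names are our own.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear value (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """WCAG contrast ratio between two colors, from 1.0 up to 21.0."""
    lum_a, lum_b = relative_luminance(color_a), relative_luminance(color_b)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Check the WCAG AA threshold: 4.5:1 for normal text, 3:1 for large text."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


if __name__ == "__main__":
    for fg in ("#000000", "#767676", "#777777"):
        ratio = contrast_ratio(fg, "#FFFFFF")
        print(f"{fg} on white: {ratio:.2f}:1, AA pass: {passes_aa(fg, '#FFFFFF')}")
```

A check like this is a quick way to verify any generated color combination yourself before it goes into a design.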

In a different direction from graphical UI design, the QoQo.ai plugin uses Figma as a platform to pull together various parts of the complete UX design process: personas, information architecture, affinity mapping, user interviews, and the like. This Swiss army knife tool raised privacy concerns, which somewhat limited our testing.

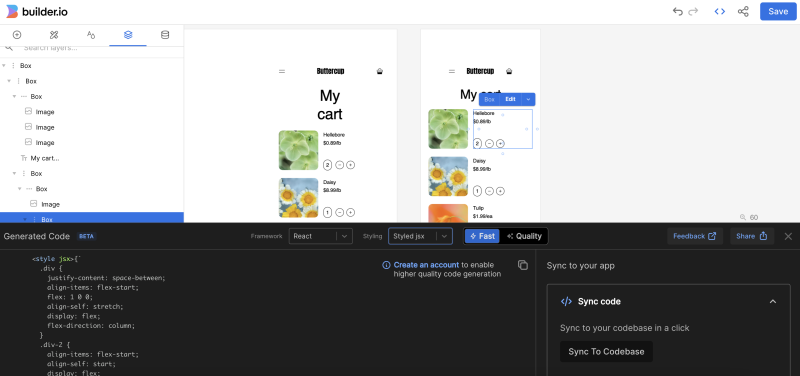

Finally, we have Builder.io, an AI solution for creating front-end code from Figma designs. It is designed to extract UI components defined in Figma as code in a desired framework. It is also the most popular Figma AI plugin so far and works surprisingly well to a certain degree, but it too shows signs of its early age and room for further development.

Conclusion: next week, the world of generative AI may be totally different

The fast development of AI technologies means we can expect technologists to keep users alert for many years to come. New tools are popping up daily; some may prove to be game changers. For UX design, the situation is that AI can provide effective assistance in some use cases, while in others it may slow down or even hinder design work. This matches the description of the “jagged technological frontier” of AI assistance in knowledge work. What’s important about the concept of the frontier is that professionals should understand their position on it: is AI helpful or harmful to my productivity and creativity at each step?

Currently, modalities (text, image, video, GUIs) are merging, and the categorical boundaries we’ve presented today may only hold for a short time. For instance, under the hood, the video avatar tool D-ID offers a combination of Stable Diffusion and GPT-3, which gives an idea of how these tools blend.

We can expect the same from OpenAI’s next-generation flagship products, ChatGPT and Dall-E. In fact, OpenAI has already announced that ChatGPT will gain new skills in October 2023, allowing it to see, hear, and speak.

So, the expectations remain high. Some of the things we know about today are worth paying attention to when they become public. These include the next generation of GPT and Dall-E (GPT-5 and Dall-E 3, the latter of which is already available at Bing), as well as Google’s Imagen. Imagen will not be released as a stand-alone product, but will be incorporated into various Google applications in the future.

As Figma acquired an AI company called Diagram in the summer of 2023, there are high hopes that Diagram’s Genius will become a top choice among AI co-pilots in graphical UI design.

As the tools change, new use cases become possible. One current concern about using text models in user research is data privacy and security. In the future, so-called proprietary models, in which you have full control of your data, are expected to replace the currently popular public models. This will impact qualitative design research significantly when it happens.

We will definitely be watching!

Written by Lassi A Liikkanen, with big thanks for the research by Emilia Iinatti, Roosa Kotilainen, Elias Maliniemi, Juha Solla, Laura Walden, Emilia Mannila, Minna Kuivalainen and Marianna Salminen.